Introduction

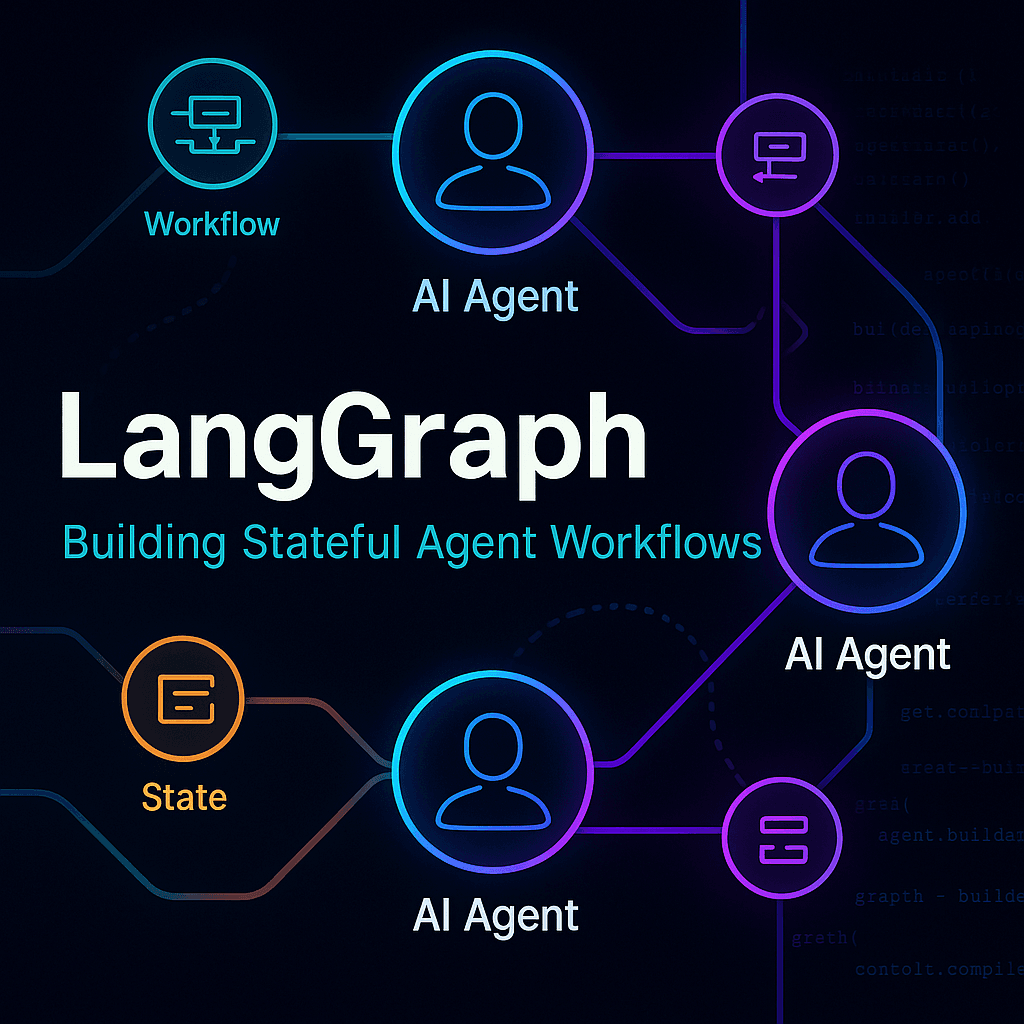

In the fast-evolving world of AI, building “just a chatbot” is no longer enough. Organizations are moving toward stateful, long-running, multi-actor agents that reason, make decisions, and orchestrate tools across many steps. LangGraph is an open-source framework designed to support exactly these complex workflows.

Created by LangChain, the organization behind the popular LLM application framework of the same name, LangGraph is positioned as the “next chapter” in agentic AI: it lets you build robust agents, workflows, and orchestration layers rather than just prompt chains.

In this blog post, we’ll take a detailed look at:

- What LangGraph is and why it matters

- The core features and architecture

- How it compares to other frameworks

- Typical use cases and when you should use it

- A basic “getting started” snippet

- Key considerations & challenges

What is LangGraph?

At its core, LangGraph is:

“a low‑level orchestration framework for building, managing, and deploying long‑running, stateful agents.”

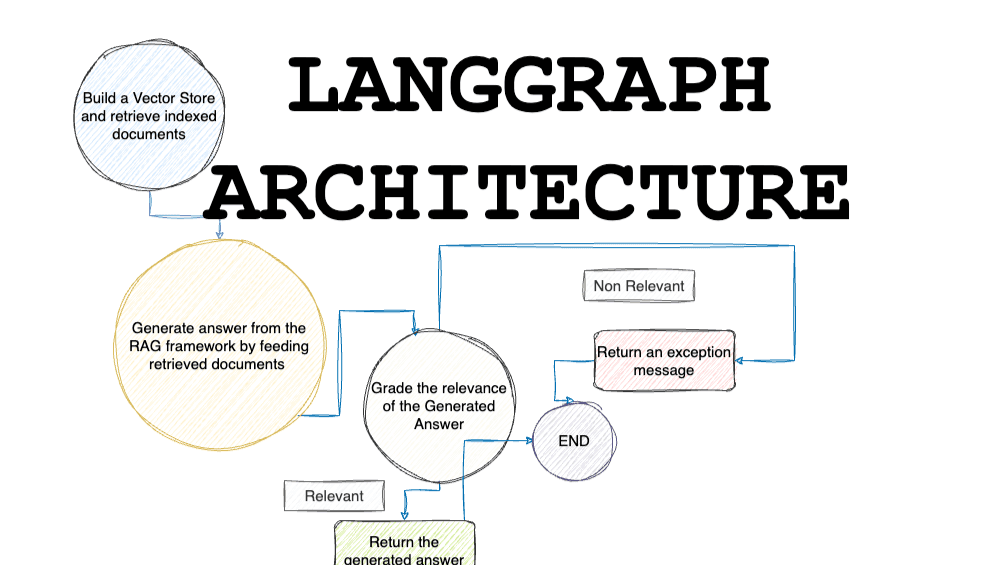

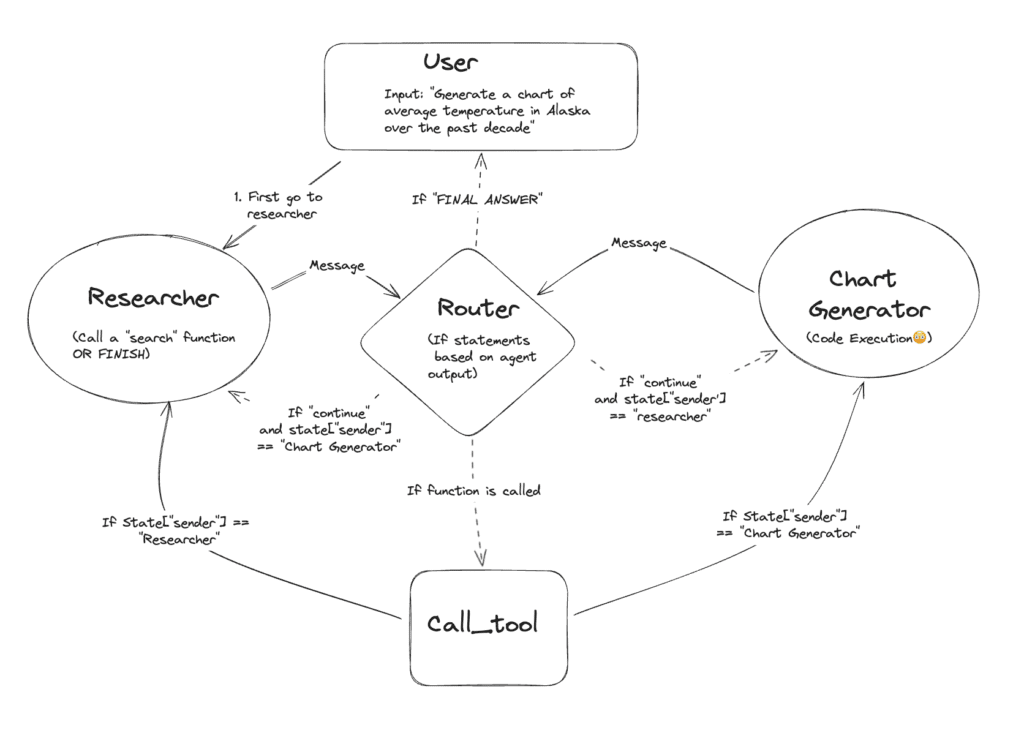

In simpler terms: rather than just writing a chain of prompts or calls, LangGraph lets you model your AI agent as a graph. Nodes do the work, edges define the transitions between them, and a shared state carries data from step to step. On top of that structure you can build workflows, manage memory, handle interrupts, add human-in-the-loop review, persist progress, and more.

Key aspects:

- Graph-based workflows: Construct agents as nodes and edges (steps and transitions) that read and update a shared state, rather than as purely linear flows.

- Persistence & durability: Agents can resume from failures, maintain state, and run for extended periods.

- Memory & human-in-the-loop: Short-term working memory within a session plus long-term memory across sessions, with the ability to inspect and steer the agent’s state.

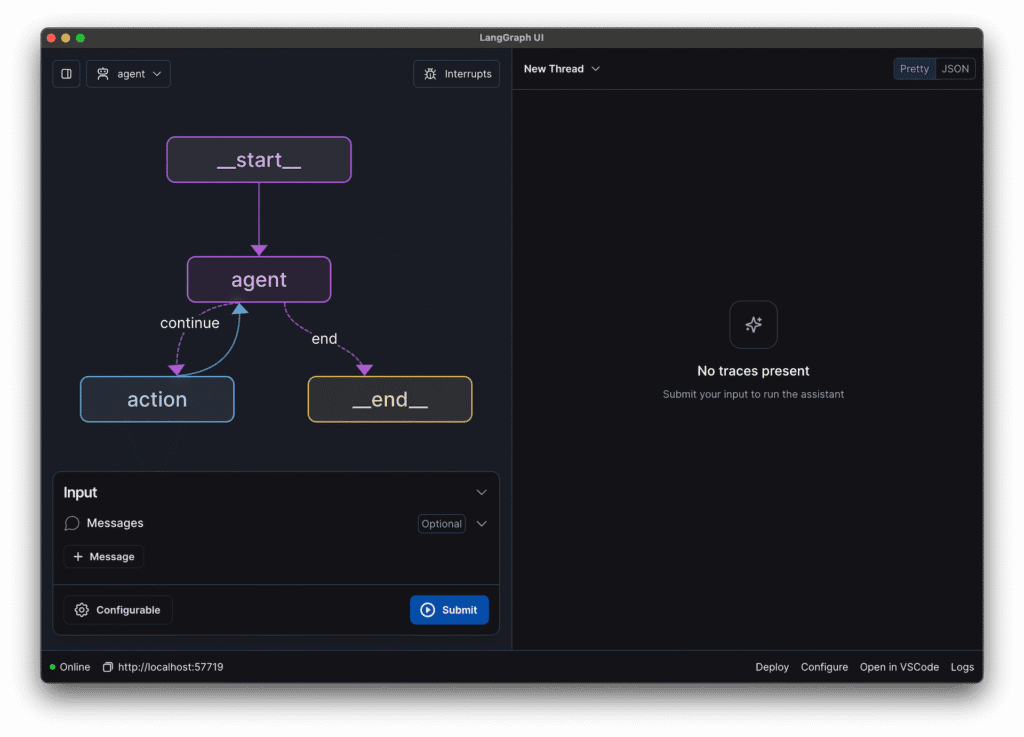

- Observability & debug tools: Integrations (e.g., LangSmith) provide tracing, monitoring, and execution history.

- Production deployment support: Infrastructure for scale, streaming, background jobs, and persistent tasks.
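To make the graph idea concrete, here is a minimal plain-Python sketch of nodes, edges, and shared state. It deliberately does not use LangGraph's API; `run_graph`, `NODES`, `EDGES`, and the three node functions are hypothetical names chosen for illustration, and the "weather" rule is a toy stand-in for an LLM decision.

```python
from typing import Callable, Dict, Optional

State = Dict[str, object]

def classify(state: State) -> State:
    # Toy routing rule standing in for a model's decision.
    return {"needs_tool": "weather" in str(state["question"]).lower()}

def call_tool(state: State) -> State:
    # Stand-in for a real API call.
    return {"tool_result": "sunny"}

def answer(state: State) -> State:
    detail = state.get("tool_result", "no tool used")
    return {"answer": f"Answer based on: {detail}"}

# Nodes: name -> function that returns a partial state update.
NODES: Dict[str, Callable[[State], State]] = {
    "classify": classify,
    "call_tool": call_tool,
    "answer": answer,
}

# Edges: name -> function that picks the next node (None means stop).
EDGES: Dict[str, Callable[[State], Optional[str]]] = {
    "classify": lambda s: "call_tool" if s["needs_tool"] else "answer",
    "call_tool": lambda s: "answer",
    "answer": lambda s: None,
}

def run_graph(entry: str, state: State) -> State:
    current: Optional[str] = entry
    while current is not None:
        # Merge the node's update into the shared state, then follow the edge.
        state = {**state, **NODES[current](state)}
        current = EDGES[current](state)
    return state

weather_run = run_graph("classify", {"question": "What is the weather in Delhi?"})
plain_run = run_graph("classify", {"question": "Say hello"})
print(weather_run["answer"])
print(plain_run["answer"])
```

The conditional edge out of `classify` is what separates this from a linear chain: the same graph takes a different path depending on the state.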

Why does it matter & what problem does it solve?

LLM‑based systems increasingly require more than “input → model → output”. Consider scenarios like:

- Multi-step task completion (book travel, coordinate across tools, modify based on feedback)

- Long-running sessions with memory (chatbots that remember past sessions)

- Agents that orchestrate external tools/APIs (databases, web services)

- Branching workflows depending on decisions (escalation, verification)

Traditional “chain of prompts” frameworks (including early versions of LangChain) can start to buckle under these demands. You lose visibility into state transitions, and scaling, debugging, and persisting across sessions all become difficult. LangGraph addresses these gaps by offering workflow orchestration, state management, and graph-structured logic. For example:

- You can persist an agent’s conversation state as checkpoints and resume after a crash.

- You can inspect how your agent moved through the graph (which node ran, what memory was used) via observability tooling.

- You can put a human in the loop: if the agent gets stuck, you can intervene and edit the state.
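The checkpoint-and-resume idea can be sketched in a few lines of plain Python. This is an illustration of the concept under simplifying assumptions, not LangGraph's checkpointer: the `checkpoints` dict stands in for a database, the steps run in a fixed order, and the crash is simulated with a hypothetical `flaky` flag.

```python
import json
from typing import Callable, Dict, List, Tuple

State = Dict[str, int]

checkpoints: Dict[str, str] = {}  # thread_id -> JSON snapshot (database stand-in)
flaky = {"fail_once": True}       # makes transform() crash exactly once

def fetch(state: State) -> State:
    return {**state, "fetched": 10}

def transform(state: State) -> State:
    if flaky["fail_once"]:
        flaky["fail_once"] = False
        raise RuntimeError("simulated crash")
    return {**state, "total": state["fetched"] * 2}

STEPS: List[Tuple[str, Callable[[State], State]]] = [
    ("fetch", fetch),
    ("transform", transform),
]

def run(thread_id: str) -> State:
    # Load the last snapshot for this thread, or start from scratch.
    saved = json.loads(checkpoints.get(thread_id, '{"next": 0, "state": {}}'))
    state, start = saved["state"], saved["next"]
    for index in range(start, len(STEPS)):
        _name, step = STEPS[index]
        state = step(state)
        # Persist after every completed step so a crash loses at most one step.
        checkpoints[thread_id] = json.dumps({"next": index + 1, "state": state})
    return state

try:
    run("thread-1")
except RuntimeError:
    pass  # the "process" died after fetch had been checkpointed

resumed_from = json.loads(checkpoints["thread-1"])["next"]
final_state = run("thread-1")  # picks up at transform; fetch is not re-run
print(resumed_from, final_state)
```

Keying snapshots by a thread identifier is what lets several conversations or jobs keep independent, resumable state.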

For companies building production‑grade AI agents (customer support bots, process automation, tool orchestration), these capabilities are major differentiators.

Core Architecture & Features

Here’s a breakdown of the major components/features you’ll interact with in LangGraph:

| Feature | Description |
|---|---|
| StateGraph / Nodes & Edges | You define nodes (steps such as model calls, tools, or actors) and edges (transitions) in a graph. Execution moves through the graph based on logic or conditions, passing a shared state between nodes. |
| Runtime & Persistence | The execution runtime supports streaming, checkpointing, and durable execution (so agents can resume). |
| Memory | Short-term working memory (within a flow) and long-term memory (across sessions) are both supported. |
| Tool/Actor integration | Nodes may correspond to tools, APIs, or functions (external or internal). The agent can call them and branch based on the results. |
| Human-in-the-Loop / Interrupts | You can require human approval or intervene in a running workflow. |
| Debugging & Observability | Using companion tooling (like LangSmith), you can inspect execution traces, states, transitions, and metrics. |
| Deployment & scaling | Supports deployment to production, monitoring, background tasks, and horizontal scaling. |
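The human-in-the-loop row is the easiest to misread, so here is a small plain-Python sketch of the pause-and-resume pattern. It is not LangGraph's interrupt mechanism; `NeedsHuman`, `approval_gate`, and the refund scenario are hypothetical and only show the shape of the idea: a node pauses the run, a person updates the state, and execution continues from the same node.

```python
from typing import Callable, Dict, List, Optional, Tuple

State = Dict[str, object]

class NeedsHuman(Exception):
    """Raised by a node to pause the run until a person responds."""
    def __init__(self, question: str) -> None:
        super().__init__(question)
        self.question = question

def draft_refund(state: State) -> State:
    return {**state, "proposal": f"Refund {state['amount']} USD"}

def approval_gate(state: State) -> State:
    # Pause if no human decision has been recorded yet.
    if "approved" not in state:
        raise NeedsHuman(f"Approve '{state['proposal']}'?")
    return state

def finalize(state: State) -> State:
    outcome = "refund issued" if state["approved"] else "refund rejected"
    return {**state, "outcome": outcome}

STEPS: List[Callable[[State], State]] = [draft_refund, approval_gate, finalize]

def run(state: State, start: int = 0) -> Tuple[State, int, Optional[str]]:
    """Return (state, index to resume from, pending question or None)."""
    for index in range(start, len(STEPS)):
        try:
            state = STEPS[index](state)
        except NeedsHuman as pause:
            return state, index, pause.question
    return state, len(STEPS), None

# First pass stops at the gate and surfaces a question for the reviewer.
paused_state, paused_at, question = run({"amount": 40})
# The reviewer records a decision in the state, and the run resumes at the gate.
resumed_state, _, pending = run({**paused_state, "approved": True}, start=paused_at)
print(question)
print(resumed_state["outcome"])
```

In a real deployment the paused state would be persisted between the two calls, which is why interrupts and checkpointing usually go together.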

How to Get Started – Basic Code Example

Here’s a simplified Python snippet, adapted from the LangGraph GitHub repository, to illustrate how you might begin with LangGraph.

    from langchain_anthropic import ChatAnthropic
    from langgraph.prebuilt import create_react_agent


    def get_weather(city: str) -> str:
        """Get weather for a given city."""
        return f"It's always sunny in {city}!"


    # Requires ANTHROPIC_API_KEY to be set in the environment
    llm = ChatAnthropic(model="claude-3-7-sonnet-latest")

    # Prebuilt ReAct-style agent: the model decides when to call get_weather
    agent = create_react_agent(
        model=llm,
        tools=[get_weather],
        prompt="You are a helpful assistant",
    )

    result = agent.invoke(
        {"messages": [{"role": "user", "content": "what is the weather in Delhi?"}]}
    )
    # The final assistant reply is the last message in the returned state
    print(result["messages"][-1].content)

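Beyond the prebuilt agent, you can assemble the same loop yourself with a StateGraph by defining nodes, edges, and a checkpointer. The following sketch reuses llm and get_weather from the snippet above:

    from langgraph.checkpoint.memory import InMemorySaver
    from langgraph.graph import START, MessagesState, StateGraph
    from langgraph.prebuilt import ToolNode, tools_condition

    def assistant(state: MessagesState):
        # Call the tool-enabled model on the conversation so far
        return {"messages": [llm.bind_tools([get_weather]).invoke(state["messages"])]}

    builder = StateGraph(MessagesState)
    builder.add_node("assistant", assistant)
    builder.add_node("tools", ToolNode([get_weather]))
    builder.add_edge(START, "assistant")
    # Route to "tools" when the last message contains tool calls, otherwise end
    builder.add_conditional_edges("assistant", tools_condition)
    builder.add_edge("tools", "assistant")
    graph = builder.compile(checkpointer=InMemorySaver())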

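You can then run the graph, stream results, and inspect its state. Because the graph is compiled with a checkpointer, each invocation needs a thread ID in its config (for example, {"configurable": {"thread_id": "1"}}) so that state can be saved and resumed per conversation.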
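As a rough picture of what streaming and state inspection mean in practice, here is a plain-Python sketch built on a generator. It does not call LangGraph's streaming API; `stream`, `PIPELINE`, and the node functions are hypothetical names, and the nodes return canned values instead of calling a model.

```python
from typing import Callable, Dict, Iterator, List, Tuple

State = Dict[str, object]

def plan(state: State) -> State:
    return {"plan": ["search", "summarize"]}

def search(state: State) -> State:
    return {"hits": 3}

def summarize(state: State) -> State:
    return {"summary": f"{state['hits']} results summarized"}

PIPELINE: List[Tuple[str, Callable[[State], State]]] = [
    ("plan", plan),
    ("search", search),
    ("summarize", summarize),
]

def stream(state: State) -> Iterator[Tuple[str, State]]:
    """Yield (node_name, update) as soon as each node finishes."""
    for name, node in PIPELINE:
        update = node(state)
        state.update(update)  # apply the update to the caller's state in place
        yield name, update

state: State = {"question": "What is LangGraph?"}
trace: List[str] = []
for node_name, update in stream(state):
    # A UI or log could render each update here instead of waiting for the end.
    trace.append(node_name)
    print(f"{node_name}: {update}")
```

The recorded `trace` doubles as a simple execution history, which is the kind of information tracing tools surface in far more detail.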

Use Cases & Industry Applications

Here are some scenarios where LangGraph shines:

- Customer-support agents: Agents that retrieve customer history, call APIs (CRM, ticketing), escalate, and remember past interactions.

- Process automation: For example, travel booking: verify user identity → check availability → call APIs → confirm → send receipt. The graph handles branching, errors, retries, and memory.

- Tool orchestration: Agents that combine LLM reasoning, tool invocation (web search, calculator, database queries), and state management.

- Multi-actor workflows: Multiple agents or “actors” cooperate (e.g., a check agent, a decision agent, a human review node). The graph structure makes the handoffs between them explicit.

- Long-running tasks: Workflows that may take hours or days and need persistence, resumption, and human intervention. LangGraph enables durable execution for these.

As one article puts it:

“LangGraph uses graph‑based architectures to model and manage the intricate relationships between various components of an AI agent workflow.”
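The travel-booking flow from the list above can be sketched as a small graph with branching and retries. This is a plain-Python illustration under stated assumptions, not LangGraph code: every function name is hypothetical, the inventory and payment calls are stubs, and the payment gateway is simulated to time out on its first two attempts.

```python
from typing import Any, Callable, Dict

State = Dict[str, Any]

def verify_identity(state: State) -> str:
    # Branch: unverified users are handed to a human instead of booking.
    return "check_availability" if state["user_verified"] else "escalate"

def check_availability(state: State) -> str:
    state["seats"] = 2  # stand-in for an inventory API call
    return "charge_card" if state["seats"] > 0 else "escalate"

def charge_card(state: State) -> str:
    # Simulated flaky gateway: the first two attempts time out.
    state["payment_attempts"] = state.get("payment_attempts", 0) + 1
    if state["payment_attempts"] < 3:
        raise TimeoutError("payment gateway timed out")
    state["paid"] = True
    return "send_receipt"

def send_receipt(state: State) -> str:
    state["receipt"] = "sent"
    return "done"

def escalate(state: State) -> str:
    state["escalated"] = True
    return "done"

NODES: Dict[str, Callable[[State], str]] = {
    "verify_identity": verify_identity,
    "check_availability": check_availability,
    "charge_card": charge_card,
    "send_receipt": send_receipt,
    "escalate": escalate,
}

def run_booking(state: State, max_retries: int = 3) -> State:
    current = "verify_identity"
    while current != "done":
        for attempt in range(1, max_retries + 1):
            try:
                current = NODES[current](state)
                break  # node succeeded, move on to the node it returned
            except TimeoutError:
                if attempt == max_retries:
                    current = "escalate"  # retries exhausted, hand off
    return state

booked = run_booking({"user_verified": True})
blocked = run_booking({"user_verified": False})
exhausted = run_booking({"user_verified": True}, max_retries=2)
print(booked)
print(blocked)
print(exhausted)
```

Each node returns the name of the next node, so the escalation and retry paths live in the graph itself rather than in scattered if/else logic.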

How LangGraph Compares With Alternatives

Traditional approaches, such as using only LangChain’s agent abstractions, are great for simpler flows. But when you need persistent state, branching workflows, and complex orchestration, they can become hard to maintain.

LangGraph brings workflow orchestration, graph logic, state persistence, and observability.

Compared with low-code agent platforms (cloud vendor offerings), you get more control and flexibility, at the cost of somewhat more engineering.

Example: Microsoft AutoGen supports multi-agent setups but may abstract away some lower-level orchestration; LangGraph lets you control the graph directly.

When to Use LangGraph & When Not

Use it when:

- You have multi-step workflows, not just stateless prompt-response

- You need state and memory across sessions

- You need durability, retries, and recovery from failures

- You need observability (debugging, tracing) into what the agent did

- You need to integrate external tools/APIs with orchestration

Avoid it or consider simpler alternatives when:

- Your use case is simple: one prompt → single response, no memory needed

- You don’t have the resources to develop a more complex architecture

- You need an ultra-quick prototype with minimal engineering (then a simpler chain may suffice)

Key Considerations & Challenges

- Engineering complexity: Although LangGraph offers power, it also requires more design work: nodes and edges, memory, checkpointing, and state management.

- Costs/Resources: Long-running agents, memory storage, and monitoring all add infrastructure cost.

- Data & Privacy: If you persist memory across sessions, you need to manage user data, consent, and deletion.

- Tooling maturity: While LangGraph is open-source and advancing, you may need to build some custom pieces.

- Governance: With complex multi-actor flows, ensuring correctness, auditability, and security can be harder.

- Learning curve: Developers must shift from a “prompt chain” mindset to a “state graph” mindset.

Conclusion

LangGraph represents a meaningful leap for teams building production-grade AI agents. If your application goes beyond single-turn chat and needs memory, tools, orchestration, and reliability, LangGraph is a strong candidate.

By conceptualizing your agents as graphs of states and transitions, and giving you persistence, observability, and control, it helps bridge the gap between “cool LLM demo” and “robust agent system”.
